Why Smart People Break Rules

and Why We Disagree About Morality

Moral disagreement is often not about values—it’s about perceiving entirely different kinds of chaos.

Seeing Morality: Not Just Thinking, But Perceiving

Most of us assume morality is something we reason about—a set of rules applied to situations we all perceive the same way. But recent research suggests something radically different: morality may be perceptually grounded. Philosophers like Robert Audi and Dustin Stokes argue that we literally perceive moral features in the world, much as we perceive color or motion.

Neuroscience offers some support for this view. Activity in the insula, a brain region involved in processing disgust, predicts moral intuitions about purity, and people born unable to feel pain show reduced empathy-based moral responses. In short: what you can perceive shapes what you can morally experience.

Moral cognition is not a detached reasoning layer. It’s a system for detecting disorder in the world around you.

Rules, Reasoning, and Entropy Management

We also deliberate about right and wrong. But in this framework, deliberation is secondary: it’s a meta-layer that regulates and refines the outputs of perceptual systems already engaged in maintaining order.

Think of it this way: your moral senses flag problems, inconsistencies, or “moral noise” in your environment. Reasoning is how you decide what to do with that information—how to stabilize the world you perceive. Deliberation doesn’t replace moral perception; it emerges from it as a higher-order stabilizing tool.

Reasoning is just a second-order attempt to manage the chaos our senses already detect.

The Metabolic Roots of Moral Intuition

The reason perceptual–moral couplings evolved may be deeply metabolic. Recent work on the Energy Resistance Principle (Picard & Murugan, 2025) proposes that biological systems must keep energy resistance (éR) in balance: enough to enable transformation, but not so much that it becomes pathological or dissipative. Moral cognition can be thought of as a cognitive éR management system: it lowers the metabolic cost of processing certain types of experiential disorder by preemptively encoding low-resistance responses to predictably high-cost situations.

When we say something “feels wrong in our gut,” it’s not just a metaphor. The insula, already implicated in disgust-based moral judgments, also processes signals from the gastrointestinal system. That visceral quality of moral intuition functions as an early-warning system, flagging patterns that would otherwise spike cognitive energy resistance if left unmanaged. Different cognitive architectures, facing distinct metabolic bottlenecks, thus evolve different perceptual saliences and moral frameworks—each optimized to minimize éR where processing costs run highest.

Moral intuition is not airy philosophy—it’s a metabolic early-warning system.

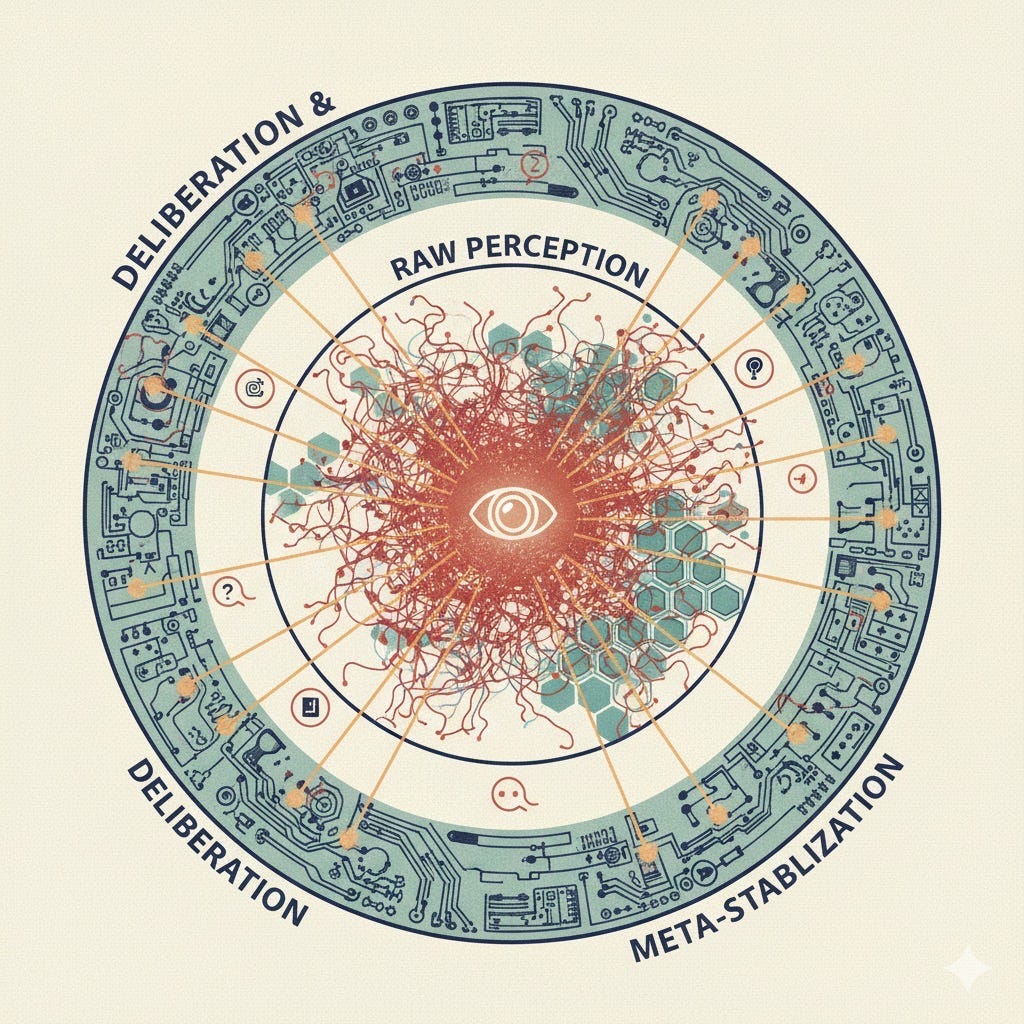

Morality as a Stability System

If perception drives morality, we can think of moral systems as entropy-management protocols operating within a given perceptual ecology. Each cognitive architecture—human or artificial—constructs a field of salience, a structured map of what counts as order, threat, or opportunity. Within that field, moral cognition directs attention and action toward preserving equilibrium against the forms of chaos the system is equipped to detect.

A mind attuned to social betrayal develops codes centered on loyalty; one sensitive to contamination prioritizes purity; one tuned to unpredictability emphasizes fairness and rule-consistency. Moral order, in this framework, tracks perceptual order: what feels morally wrong is often what registers as perceptually destabilizing.

When perceptual tunings diverge—through neurodiversity, cultural formation, or differing artificial architectures—moral disagreement becomes structurally intractable. Conflicts are not simply about clashing values; they emerge from entirely distinct ontologies of disorder, each representing a legitimate attempt to stabilize the world as it is perceived.

Implications: Pluralism, AI, and Perceptual Empathy

This framework reframes moral pluralism: different architectures produce different moral worlds. Conflict isn't a failure; it's inevitable.

Humans: Moral cooperation requires perceptual empathy—inhabiting another person’s perceptual world long enough to see what they see as disorder.

AI: Alignment isn’t just rules; it’s perceptual translation—teaching machines to detect moral instability as humans experience it.

What looks like moral disagreement may just be a clash between different entropy maps.

Takeaways for Everyday Life

When someone breaks a rule “because they can,” it may be that their perceptual world simply doesn’t flag it as destabilizing.

Moral arguments fail not because people are stubborn, but because their moral sensors are tuned differently.

Cultivating perceptual empathy—trying to understand the chaos others perceive—may be the most practical path to cooperation.

(Summary of ideas explored using ChatGPT and Claude.)